This article was written by guest author Josh Woods, editor of the disc golf publication Parked. Woods is a professor of sociology at West Virginia University. He has published disc golf research in academic publications and is working on a book about the same topic.

Recently, I read an article from this blog called “The Most Popular Disc Golf Courses In Every State: 2019.” As I scanned which courses had made the list, I noticed that some of the most popular courses were not what I’d call “all that and a bag of chips.”

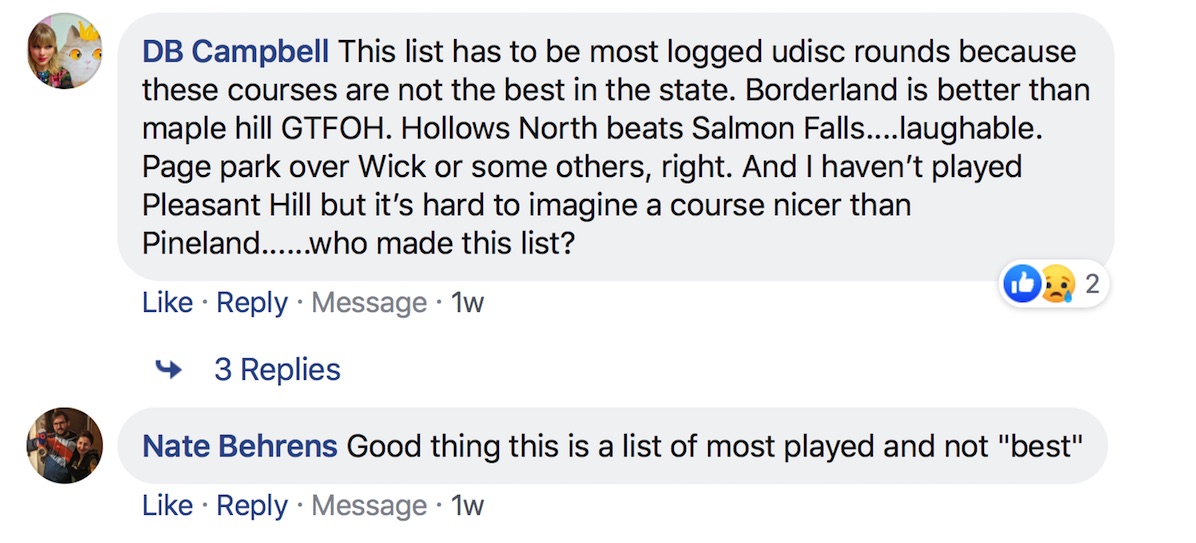

The internet agreed. Comments on Facebook and reddit suggested that the “most popular” courses in quite a few areas were not "the best," as you can see in the image below:

As a Gen Xer, I wasn't surprised. When I was in high school back in the early 1990s, self-deprecation and feeling like a flannel-clad outcast were in, and being popular was out. And no matter your generation, popularity and quality have never been necessarily linked. Is Bud Light the highest quality beer? Does McDonald’s make the best hamburger? Is binge-watching Netflix really an optimal use of your time?

To be clear, the article defined “most popular” as the “highest number of rounds logged in UDisc” and never stated that popular and good are the same thing. But I still wondered about something: Why are so many disc golfers playing supposedly lackluster courses?

Some commenters on social media pointed out that the best courses in their states are located far from major population centers. I thought that could be the answer to my question. And if it was, UDisc’s list of “most popular” courses by state might have been better labeled as “most convenient.”

Armed with this reasoning, I reached out to UDisc and asked them if I could analyze their data. I wanted statistical proof that “popular” had little to do with “good.” In other words, I was looking to show that number of rounds logged on courses (the statistic UDisc used to create its “most popular” list) had little to do with their average ratings (the metric I used to measure quality).

The Analysis

UDisc has compiled rounds and ratings over the last four years and now houses data on thousands of courses across the world. But some courses have not yet been rated by enough disc golfers for the average rating to be reliable. For this reason, I narrowed the population of courses to those with 30 ratings or more. It’s important to note that while UDisc users can log multiple rounds at a single course, they cannot rate a course more than once.

Next, I grouped the courses by country and then by state. For each group, I calculated the correlation between average ratings and rounds logged. If you’re familiar with how correlations work, go ahead and skip the next section. If not, have a look so you know what the numbers I use in my analysis really mean.

Understanding Correlations

Correlation is a technique to show how strongly connected things are. For example, imagine someone paid by the hour for work. There would be a perfect positive correlation between 1) The number of hours they worked and 2) The amount of money they earned. Now, think of someone going up a set of stairs. There would be a perfect negative correlation between 1) The number of stairs they climbed and 2) The number of stairs left to climb.

A perfect positive correlation is represented by the number 1, and a perfect negative correlation is represented by the number -1. If two things are completely unrelated, that’s represented by 0. Though I haven’t done the research, it's likely that if I looked at 1) The number of Ford Focuses sold in the world and 2) Paige Pierce's C1X putting percentage on consecutive days of a tournament, there would be almost 0 correlation.

This all means that when you see a positive number below, it shows that more rounds logged on a course correlated with higher ratings. The closer the number is to 1, the stronger the correlation, and the closer to 0, the weaker the correlation. Negative numbers mean that more rounds logged on a course correlated with lower ratings. The closer the number is to -1, the stronger the correlation, and the closer to 0, the weaker the correlation.
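If you'd like to check the two examples from this section yourself, the short Python sketch below computes a Pearson correlation coefficient by hand. The coefficient type is my assumption for illustration, and the $15 hourly wage and the five-step staircase are made-up numbers.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How much the two variables move together...
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # ...scaled by how much each one varies on its own.
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hourly worker: pay rises in lockstep with hours (hypothetical $15/hour).
hours = [1, 2, 3, 4, 5]
pay = [15 * h for h in hours]

# Staircase: every stair climbed is one fewer left (hypothetical 5 stairs).
climbed = [0, 1, 2, 3, 4, 5]
remaining = [5 - c for c in climbed]

print(round(pearson(hours, pay), 3))
print(round(pearson(climbed, remaining), 3))
```

The first value lands at the perfect positive end of the scale and the second at the perfect negative end. Real-world data, like the rounds and ratings below, almost always falls somewhere in between.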

An International Comparison

The table below shows the correlation between number of rounds logged on courses and their ratings in the five countries with the most UDisc users:

| Country | Correlation |
| --- | --- |
| Sweden | .502 |
| United States | .357 |
| Norway | .356 |
| Finland | .244 |
| Canada | .219 |

As you can see, the correlations are positive in every country, meaning that in each place, courses that were played more also tended to be rated more highly. This finding suggests that my theory that popular disc golf courses are rarely good disc golf courses was off the mark. And for some context about how strong these correlations are, it might help to know that, per large-scale studies of U.S. residents, the correlation between years of education and annual income is considered very significant and is somewhere around .40.

Still, the positive correlations were not equal in all countries. In Sweden, the correlation was quite strong at .502. More so than their Nordic neighbors, the Swedes tend to log their rounds at top-rated courses.

The same can't be said of Canadians. It appears that Canada’s wide-open spaces have a downside. While there may be several other factors at play here, the remoteness of top courses in Canada may explain the relative disconnect between ratings and rounds logged, as was discussed in a recent Release Point article on disc golf in Alberta.

Most U.S. Residents Play Quality Popular Courses

In all but a few states, there is a positive correlation between rounds logged and average course ratings. You can see the full list of state correlations at the bottom of this article, but the tristate region of New Jersey, Maryland, and Delaware is a real standout in that data set. The correlations for these three states are the strongest on the list, ranging from .566 to .660.

For a bit more context, let’s look at some of the courses in New Jersey.

Located just fifteen miles from Philadelphia, Stafford Woods is the top-rated course in New Jersey, and is also a clear leader in popularity. Other Jersey courses at Ocean County, Thompson, and Greystone Woods are both highly rated and often played, as well. On the other end of the spectrum, there are several courses that are neither highly rated nor popular.

New Jersey has a few outliers—for instance, Alcyon Woods is highly rated yet underplayed—but overall, the state represents the clearest contradiction of my initial hypothesis.

Taking What You Can Get

There are, however, several states with weak positive correlations and three states with negative correlations. The relationship between rounds and ratings is negative in Montana, New Mexico, and Wyoming. Like Canada (and unlike New Jersey), these states have low population densities, and their disc golf communities appear to be spread out, making travel to top-rated courses difficult.

For instance, Wyoming’s highest rated course, the Canyon Course at Leaning Rock, is in Pine Bluffs, a town of 1,129 people located in the state’s southeasternmost corner. The closest large disc golf community is 42 miles away in Cheyenne. The nearest disc golf hubs elsewhere in Wyoming, Colorado, and Nebraska are more than an hour’s drive. The Canyon Course is relatively new, which may partially explain its low play count, but even when accounting for its brief history, its popularity is well below several other courses in Wyoming.

Although there may be many causes of these results, disc golfers in a handful of states (Virginia, Louisiana, Hawaii, Montana, New Mexico, and Wyoming) appear to be logging most of their rounds at okay courses, not highly rated ones.

The Takeaway

If this whole time your inner skeptic has been saying, "These correlations don’t say much," it's time to tell you that they're right—at least in some respects. The biggest rule when looking at correlation is to know that it doesn't prove causation. There are alternative explanations besides "people tend to play good courses more" for the correlations revealed above, as well as for the differences across countries and states. One we've already touched on is how the remoteness of top-rated courses in some states may be reducing the correlations between rounds played and average ratings, but we don’t know that for sure.

Yet, the results are interesting. In fact, just about any empirical relationship between people’s attitudes and behaviors is a little surprising. Humans are infamously hard to predict. Think about all that goes into a person’s rating of a disc golf course and their decision to play it. Numerous subjective aspects of quality, aesthetics, difficulty, proximity, group pressure, home-course bias, self-presentation, and other factors may shape a course’s rating and popularity.

Essentially, though you should still approach this data with healthy skepticism, the fact that a positive correlation between plays and ratings exists anywhere is worth pondering for any thoughtful disc golfer.

Below is the table showing the correlation between number of rounds logged on courses and course ratings in 48 states. North Dakota and Rhode Island were excluded from the list because there were fewer than 30 ratings of the courses in these states:

| State | Correlation |
| --- | --- |
| New Jersey | .660 |
| Maryland | .611 |
| Delaware | .566 |
| Illinois | .559 |
| Iowa | .522 |
| Massachusetts | .508 |
| South Carolina | .506 |
| Florida | .499 |
| Alabama | .498 |
| New Hampshire | .483 |
| Pennsylvania | .478 |
| New York | .470 |
| Ohio | .460 |
| Georgia | .453 |
| Connecticut | .452 |
| Indiana | .449 |
| West Virginia | .449 |
| Idaho | .444 |
| Oregon | .433 |
| California | .426 |
| Minnesota | .424 |
| Missouri | .419 |
| Kansas | .411 |
| North Carolina | .404 |
| Texas | .404 |
| Washington | .384 |
| Vermont | .380 |
| Tennessee | .376 |
| Arizona | .372 |
| Wisconsin | .363 |
| Michigan | .359 |
| South Dakota | .359 |
| Alaska | .349 |
| Maine | .341 |
| Kentucky | .339 |
| Mississippi | .337 |
| Arkansas | .327 |
| Oklahoma | .314 |
| Nevada | .298 |
| Utah | .261 |
| Colorado | .255 |
| Nebraska | .214 |
| Virginia | .118 |
| Louisiana | .077 |
| Hawaii | .013 |
| Montana | -.029 |
| New Mexico | -.102 |
| Wyoming | -.206 |